TL;DR

AI for medical research is most useful for speeding up evidence discovery and summarization. It is least reliable when you ask it to supply facts without sources, replace critical appraisal, or make patient-specific decisions. Use AI to reduce time spent searching and organizing evidence, then verify every key claim against primary sources.

Best uses: literature triage, query building, evidence summaries with citations, guideline comparisons, and extracting outcomes and limitations.

Avoid using it for: diagnosis, dosing, definitive treatment decisions, or any answer without traceable sources.

What is AI for medical research?

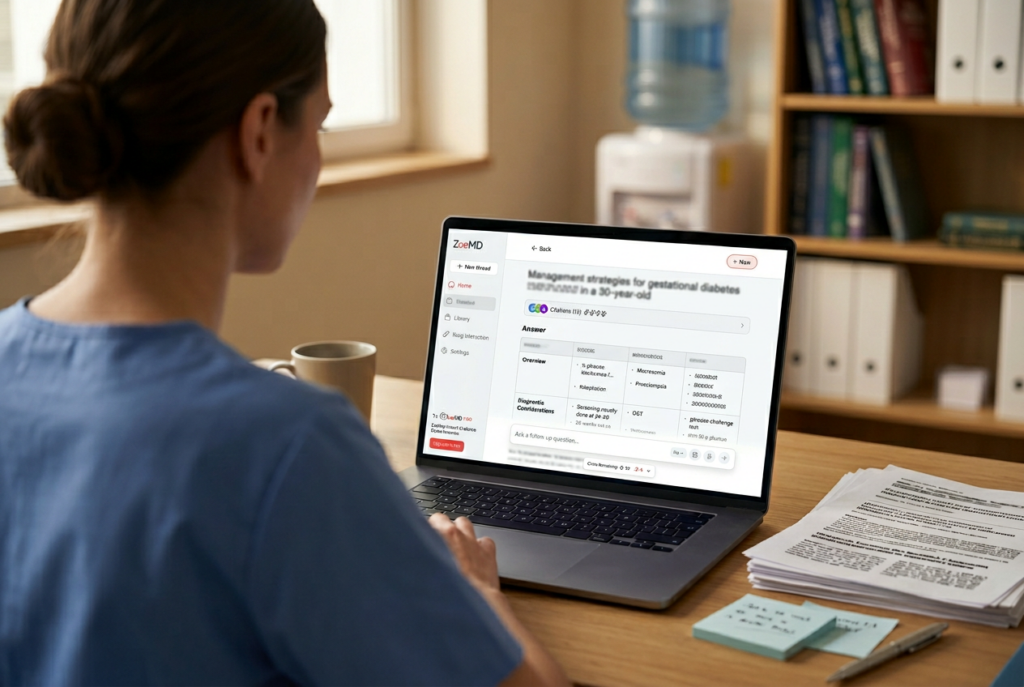

AI for medical research is software that helps clinicians and researchers find, summarize, and organize medical evidence using natural language questions.

In practice, it can behave like:

- a clinical evidence summary tool that turns a question into a structured answer

- a literature review assistant that helps screen papers, extract key outcomes, and compare findings

The value is not magic knowledge. The value is speed, structure, and recall. The risk is false confidence if sources are missing or misapplied.

If you are new to the category, see this overview of medical research AI on ZoeMD: Medical Research AI in 2026.

When AI for medical research helps

1) Turning a clinical question into a search-ready query

AI is strong at translating a messy question into a structured research prompt using frameworks like PICO (population, intervention, comparator, outcome).

Helpful outputs:

- suggested keywords and synonyms

- inclusion and exclusion ideas

- suggested study types (RCT, cohort, systematic review)
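
As a rough illustration of this step, the sketch below assembles a Boolean search string from PICO components. The `PicoQuestion` class, the `build_query` function, and the placeholder terms are assumptions made for this example, not part of any database's API. Real databases have their own field tags and syntax, so treat the output as a starting point to adapt.

```python
from dataclasses import dataclass, field


@dataclass
class PicoQuestion:
    """A clinical question split into PICO parts (hypothetical helper class).

    Each part holds a list of synonyms that will be OR-ed together.
    """
    population: list[str]
    intervention: list[str]
    comparator: list[str] = field(default_factory=list)
    outcome: list[str] = field(default_factory=list)


def or_group(terms: list[str]) -> str:
    """Quote each term and join the synonyms with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(question: PicoQuestion) -> str:
    """Join the non-empty PICO groups with AND; empty groups are skipped."""
    groups = [
        question.population,
        question.intervention,
        question.comparator,
        question.outcome,
    ]
    return " AND ".join(or_group(group) for group in groups if group)


# Placeholder terms only; substitute the keywords and synonyms for your question.
example = PicoQuestion(
    population=["condition X", "condition X synonym"],
    intervention=["drug A"],
    outcome=["hospitalization"],
)
print(build_query(example))
```

Keeping the synonyms in lists makes it easy to paste in the keywords an AI tool suggests, review them, and then run the final string in the database yourself.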

2) Literature triage at scale

AI can quickly summarize abstracts, flag likely relevance, and group papers by themes.

Where it shines:

- screening a large result set from PubMed or databases

- clustering evidence by population, intervention, and outcomes

3) Evidence summarization with explicit citations

AI becomes meaningfully safer when it links claims to sources.

Use it to:

- summarize RCT outcomes

- compare endpoints across trials

- extract limitations and applicability

This is the core promise of evidence-based approaches described in: Evidence-Based Medicine in 2026.

4) Comparing guidelines and identifying differences

Guideline documents are long, updated frequently, and differ across regions.

AI can help:

- outline recommendation differences

- highlight changes across guideline versions

- point to key tables, risk stratification, and contraindications
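
For the version-comparison case, a plain text diff can serve as a cross-check on whatever an AI summary reports as changed. The following is a minimal sketch using Python's standard `difflib` module. The function name `changed_lines` is hypothetical, and the recommendation text is placeholder wording, not taken from any real guideline.

```python
import difflib


def changed_lines(previous: str, current: str) -> list[str]:
    """Return only the removed ("- ") and added ("+ ") lines between versions."""
    diff = difflib.ndiff(previous.splitlines(), current.splitlines())
    # ndiff also emits unchanged lines ("  ") and hint lines ("? "); drop those.
    return [line for line in diff if line.startswith(("- ", "+ "))]


# Placeholder text standing in for two versions of the same section.
previous_version = (
    "Recommendation 1: offer [first-line option].\n"
    "Recommendation 2: monitor yearly."
)
current_version = (
    "Recommendation 1: offer [first-line option].\n"
    "Recommendation 2: monitor every 6 months."
)

for line in changed_lines(previous_version, current_version):
    print(line)
```

A diff shows only that wording changed, not whether the change matters clinically, so it complements the AI summary rather than replacing a careful read.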

5) Creating structured outputs for downstream use

AI is well suited to reformatting evidence you have already gathered into a consistent structure.

Examples:

- evidence tables (study, population, outcomes, limitations)

- patient-friendly explanation drafts (for clinician review)

- discussion points for shared decision-making

When AI for medical research does not help

1) When it answers without traceable sources

If an answer has no citations, it is not evidence. It is text.

Rule: If you cannot open the source and confirm the claim, treat it as unverified.
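
One way to make this rule hard to skip is to track a status for every claim you plan to reuse. The sketch below is an assumption about how that could look; the `Claim` structure and the status labels are hypothetical and do not come from any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    """One statement taken from an AI answer (hypothetical structure)."""
    text: str
    source: Optional[str] = None  # a citation you can open; None if absent
    confirmed: bool = False       # set to True only after reading the source


def status(claim: Claim) -> str:
    """Apply the rule: no source, or an unchecked source, means unverified."""
    if not claim.source:
        return "unverified: no source given"
    if not claim.confirmed:
        return "unverified: source not yet checked"
    return "verified"


# Placeholder claims; the citation string is not a real reference.
claims = [
    Claim("Statement with no citation"),
    Claim("Statement with a citation", source="Placeholder citation"),
    Claim("Statement checked by a reader", source="Placeholder citation", confirmed=True),
]
for claim in claims:
    print(f"{status(claim)} | {claim.text}")
```

The `confirmed` flag is deliberately manual: only a person who opened the source should set it.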

2) When the question is patient-specific

Research summaries do not equal clinical decisions.

Avoid using AI to:

- diagnose

- choose treatment

- set dosing

- interpret individual lab values without clinician oversight

3) When you need critical appraisal, not a summary

AI can summarize a study but still miss:

- hidden bias

- confounding

- inappropriate endpoints

- poor external validity

You still need appraisal skills.

4) When evidence is sparse or contradictory

In low-evidence domains, AI may fill gaps with plausible guesses.

High-risk scenarios:

- rare diseases

- newly approved therapies

- rapidly shifting guidance

5) When you need context that is not in the paper

AI cannot reliably infer:

- local formulary constraints

- operational realities

- patient preferences

- nuance that comes from bedside experience

A safe workflow clinicians can use

Use this five-step workflow to keep speed without sacrificing rigor.

Step 1: Define the clinical question

Write the question as one sentence that names the population and the outcome of interest.

Step 2: Ask for search terms and study types

Have AI propose keywords and filters, then run the search yourself.

Step 3: Use AI to triage and extract

Give the AI the abstracts and ask it to extract:

- outcomes

- effect sizes (if present)

- limitations

- applicability

Step 4: Verify the key claims

Open the sources. Confirm:

- the population matches your scenario

- the outcome is correctly stated

- the magnitude and uncertainty are not distorted

Step 5: Document the evidence trail

Record which sources were used and what the evidence supports.
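
Steps 4 and 5 can be captured in a small record that is saved alongside your notes. The sketch below is one possible shape, not a standard format. The class names, field names, and JSON layout are all assumptions, and the entries are placeholders rather than real references.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class SourceCheck:
    """Result of opening one source and checking it (Step 4)."""
    citation: str
    population_matches: bool
    outcome_correct: bool
    magnitude_undistorted: bool

    def passed(self) -> bool:
        return (self.population_matches and self.outcome_correct
                and self.magnitude_undistorted)


@dataclass
class EvidenceTrail:
    """What was asked, when it was checked, and which sources held up (Step 5)."""
    question: str
    checked_on: str  # ISO date string, e.g. "2026-01-15"
    sources: list[SourceCheck] = field(default_factory=list)

    def supporting_sources(self) -> list[str]:
        """Citations that passed all three verification checks."""
        return [s.citation for s in self.sources if s.passed()]

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


# Placeholder entries; the citations are not real references.
trail = EvidenceTrail(
    question="In [population], does [intervention] reduce [outcome]?",
    checked_on="2026-01-15",
    sources=[
        SourceCheck("Placeholder trial A", True, True, True),
        SourceCheck("Placeholder trial B", False, True, True),
    ],
)
print(trail.supporting_sources())
print(trail.to_json())
```

Sources that fail a check stay in the record, so a later reader can see what was considered and why it was set aside.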

For a broader view of how this fits into modern clinical decision support (CDS), see: AI Clinical Decision Support.

Prompt examples that are safe and actually useful

These examples are designed to produce structured research outputs, not clinical decisions.

Example 1: Evidence table from a paper set

Prompt:

Summarize these abstracts into a table with columns: study design, population, intervention, comparator, primary outcome, key results, limitations. Include citations per row.
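
Once the tool returns its rows, a small check can enforce the "citations per row" requirement before the table is reused. This sketch assumes the rows have already been parsed into dictionaries. The column keys mirror the prompt above, and the function names are hypothetical.

```python
import csv
import io

# Column keys mirroring the prompt, plus the per-row citation.
COLUMNS = [
    "study_design", "population", "intervention", "comparator",
    "primary_outcome", "key_results", "limitations", "citation",
]


def missing_fields(row: dict[str, str]) -> list[str]:
    """List the columns that are absent or blank in one row."""
    return [col for col in COLUMNS if not (row.get(col) or "").strip()]


def to_csv(rows: list[dict[str, str]]) -> str:
    """Write the table as CSV, refusing rows with blank or missing columns."""
    problems = {i: missing_fields(row) for i, row in enumerate(rows)}
    problems = {i: cols for i, cols in problems.items() if cols}
    if problems:
        raise ValueError(f"rows with missing fields: {problems}")
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Placeholder row; every value is a stand-in, not data from a real study.
complete_row = {col: f"[{col}]" for col in COLUMNS}
uncited_row = dict(complete_row, citation="")

print(to_csv([complete_row]))
print(missing_fields(uncited_row))
```

Rejecting the whole table when any row lacks a citation is a deliberately strict choice. A looser variant could export the complete rows and list the rest for follow-up.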

Example 2: Guideline comparison

Prompt:

Compare the most recent guideline recommendations for [condition] across [region A] and [region B]. List differences in first-line therapy, contraindications, and monitoring. Cite each guideline section used.

Example 3: Applicability check

Prompt:

From this RCT, list inclusion and exclusion criteria and explain which patient types the results may not generalize to. Cite where each criterion is stated.

If your tool cannot cite sources clearly, use it only for formatting and brainstorming, not for evidence claims.

What to look for in a clinical evidence summary tool or literature review assistant

A tool is more likely to be useful in real clinical research workflows if it can:

- Show citations clearly (paper, guideline, or section-level references)

- Separate facts from interpretation

- Handle updates (freshness and versioning)

- Support structured outputs (tables, key points, limitations)

- Describe its capabilities consistently across product pages, documentation, and public claims

If you are selecting or implementing CDS tools, this primer may help: Clinical Decision Support Systems.

Common pitfalls and how to avoid them

Pitfall: Asking for “the best treatment”

Fix: Ask for evidence comparison, endpoints, and patient selection criteria, then decide clinically.

Pitfall: Treating citations as proof

Fix: Open the sources and confirm the claim, population, and outcomes.

Pitfall: Over-trusting polished language

Fix: Require numbers, confidence intervals when available, and limitations.

Pitfall: Using outdated sources

Fix: Check publication date, guideline version, and whether newer evidence exists.
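
The date check in the last fix is easy to automate as a first pass. The sketch below flags sources older than a cutoff. The five-year default is an arbitrary assumption chosen for illustration, not a recommended threshold, and the function names are hypothetical.

```python
from datetime import date


def age_in_years(published: date, today: date) -> int:
    """Whole years elapsed between the publication date and today."""
    had_anniversary = (today.month, today.day) >= (published.month, published.day)
    return today.year - published.year - (0 if had_anniversary else 1)


def needs_recheck(published: date, today: date, max_age_years: int = 5) -> bool:
    """True when the source is at least max_age_years old (assumed cutoff)."""
    return age_in_years(published, today) >= max_age_years


# Illustrative dates only.
today = date(2026, 1, 15)
for published in (date(2019, 3, 1), date(2024, 6, 30)):
    flag = "recheck" if needs_recheck(published, today) else "ok"
    print(published.isoformat(), flag)
```

A flag means only "look for something newer". Whether an older source still stands depends on the guideline version and on whether newer evidence exists, which a date comparison cannot tell you.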

FAQs

Is AI good for literature reviews?

It can be very helpful for screening, summarizing, and structuring a literature review. You still need human judgment for study selection, appraisal, and synthesis decisions.

Can AI replace PubMed searching?

No. It can speed up query design and triage, but you should run searches in primary databases and verify the sources.

What is the biggest risk of AI for medical research?

Answers that sound confident but are incorrect, incomplete, or not applicable to the clinical scenario.

How do I know an AI answer is trustworthy?

The answer should include citations you can open, and the claims should match the cited text.

Does AI help with guideline updates?

Yes. It can highlight changes and summarize recommendations, but you must confirm the guideline version and the context in which each recommendation applies.

What should I never ask AI to do?

Do not ask it to diagnose, prescribe, or provide patient-specific treatment decisions without appropriate clinical oversight.

How can AI reduce clinician workload without lowering quality?

Use it to reduce time spent searching, formatting, and organizing evidence. Keep verification and decision-making with the clinician.

For a related perspective on reducing evidence-hunting time, see: Physician Burnout Solutions.

Conclusion

AI for medical research helps when it accelerates evidence discovery, organizes findings, and surfaces citations you can verify. It fails when it is asked to replace appraisal, substitute for clinical judgment, or produce answers without sources.

If you want a clinician-first workflow that prioritizes cited evidence, explore ZoeMD’s research-focused approach through the blog: ZoeMD Blog.